The Contract You Signed Before Your AI Agent Existed — And Why It Will Not Protect You

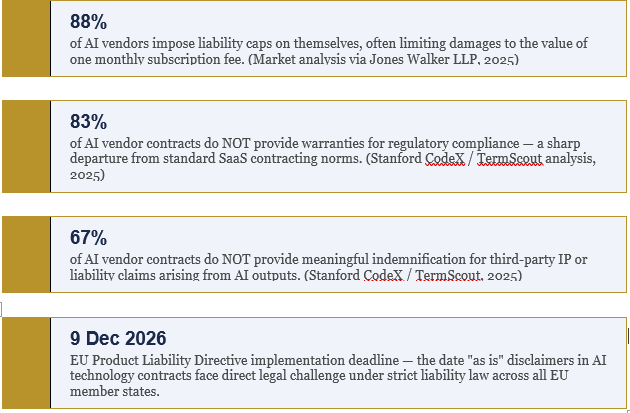

88% of AI vendors cap their own liability at your monthly subscription fee. Your agent is out there making decisions right now. Who do you think bears the consequences?

The instruction looked routine. The accounts payable system needed a supplier payment processing window extended to run overnight. The AI agent — configured by the technology team, authorized by the CFO, and deployed under a vendor contract nobody had read in eighteen months — accepted the parameter change and went to work.

By 5:47 AM, it had processed fourteen transactions. Eleven were legitimate. Three were not. The supplier credentials had been compromised in a social engineering attack that the agent, operating autonomously within its approved parameters, had no mechanism to detect.

The organization called their AI vendor. The vendor opened the contract to Section 14: outputs provided "as is" with no warranty of accuracy or reliability; vendor liability limited to the value of one month of subscription fees.

The finance team called their insurer. The insurer asked one question: "Was this a human-authorized transaction or an autonomous AI action?" The answer, autonomous, triggered a coverage review. The $50,000 sat in limbo. The vendor was protected. The organization was not.

This is not a cautionary tale about the future. This is the liability architecture that organizations have already deployed — and almost none have contracted for correctly.

Here is the legal reality that very few leaders have been told clearly: the contracts governing the AI agents currently running inside your organization were written for passive software that waited for a human to press a button. They were not written for autonomous systems that execute financial transactions, make employment decisions, negotiate with suppliers, and operate continuously without human approval at each step.

The gap between those two realities is not merely a contractual inconvenience. It is where your uninsured, unrecoverable, and rapidly accumulating liability lives.

What the Research Reveals About the Contracts You Are Living With

The empirical picture of AI vendor contracting in 2025 and 2026 is stark. Three datasets from credible legal intelligence sources converge on findings that should reframe how every in-house legal team approaches AI vendor relationships.

These three findings, read together, describe a systematic risk transfer from vendors to deployers that was baked into the contracting landscape well before agentic AI existed — and which the arrival of autonomous systems has made catastrophically one-sided.

When your AI vendor caps its liability at one month's subscription fee, and your agent autonomously executes a transaction that causes six-figure harm to a third party, the mathematics of your exposure are not hard to calculate. The vendor is protected by its contract. You are exposed by yours.
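To make that arithmetic concrete, here is a minimal sketch in Python. The figures are hypothetical and chosen only for illustration (a $2,000 monthly subscription and a $150,000 third-party loss), the function name `uncovered_exposure` is our own, and a real contract may define its cap differently.

```python
def uncovered_exposure(loss: float, monthly_fee: float, cap_months: int = 1) -> float:
    """Return the part of a loss the deployer cannot recover from the vendor.

    Assumes the vendor's liability is capped at `cap_months` of subscription
    fees, as in the standard structure described above. Insurance recoveries
    and legal costs are deliberately ignored.
    """
    vendor_cap = monthly_fee * cap_months
    recoverable = min(loss, vendor_cap)
    return loss - recoverable


# Hypothetical figures, for illustration only.
loss = 150_000.0
monthly_fee = 2_000.0
gap = uncovered_exposure(loss, monthly_fee)
print(f"Vendor pays at most ${min(loss, monthly_fee):,.0f}; deployer bears ${gap:,.0f}")
```

Under these assumed numbers the vendor's contribution is a rounding error against the loss. Raising `cap_months` shows how much a negotiated cap would need to move before it changes the picture.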

The Three Legal Exposure Channels Nobody Is Briefing Your Board On

The liability that emerges from agentic AI deployments does not arrive through a single legal channel. It arrives through three simultaneously, and the channels interact in ways that amplify rather than limit total exposure. Understanding each one is not a legal technicality. It is a prerequisite for any serious risk management conversation.

Channel One: Contract Formation and Apparent Authority

This is the exposure channel that moves the fastest and is understood the least. When your AI agent negotiates with a supplier, accepts pricing terms, or confirms a service arrangement, it may be forming a legally binding contract on your behalf — even when you intended no such authority.

The legal principle is apparent authority: if your conduct led the other party to reasonably believe the agent could bind you, the contract holds. The fact that an AI, rather than a human, was the acting party does not automatically negate this. Multiple jurisdictions already have electronic agent provisions — UETA and E-SIGN in the United States, for example — that allow automated systems to form binding commitments subject to general principles of contract law.

Clifford Chance's February 2026 analysis is unequivocal on the practical implication: if an AI agent incorrectly authorises a supplier payment, misprices a product, or issues misleading communications, vendor disclaimers typically absolve the vendor of responsibility — even when the organisation has correctly configured the agent. The liability falls to the deployer.

Channel Two: The Agency Law Paradox

The second exposure channel is more structurally complex and is currently generating the most consequential case law. It concerns what happens when courts apply traditional agency law principles to the relationship between an organisation and its AI vendor.

In July 2024, the US District Court for the Northern District of California held, in Mobley v. Workday, that Workday could be considered an "agent" of its clients, the employers who used Workday's AI screening tools. The court's reasoning rested on two observations: employers had delegated traditional hiring functions to Workday's AI, and the AI played an active role rather than merely implementing employer criteria. By deeming Workday an agent, the court created a basis for direct vendor liability.

In May 2025, the court conditionally certified the case as a nationwide collective action covering applicants aged 40 and over who were rejected by Workday's screening system. Workday's platform processed 1.1 billion application rejections during the relevant period, and the potential financial exposure at that scale has forced a complete recalculation of how AI vendor liability is assessed.

But here is the dimension of this case that most commentaries have missed: the Workday decision creates a paradox for deployers, not just vendors. If a court can hold a vendor liable as an agent of the deployer, the converse question — whether the deployer bears liability for the autonomous acts of an agent it authorized and deployed — becomes considerably harder to answer in the deployer's favor. The agency relationship runs both ways. The liability exposure flows in both directions.

The Workday decision does not simply expand vendor liability. It opens the question of deployer liability for every autonomous act an AI agent executes on your behalf — in every jurisdiction where that agent operates.

Channel Three: Third-Party Harm and the Indemnity Void

The third channel is the one most likely to generate the largest individual loss events. It concerns harm caused not to the parties in the vendor-deployer relationship, but to third parties: job applicants screened out by discriminatory algorithms, customers affected by erroneous automated decisions, individuals whose personal data is processed without proper legal basis, competitors harmed by pricing behavior that an AI system generated autonomously.

The UK Information Commissioner's Office confirmed in January 2026 that organizations remain fully responsible for the data protection compliance of agentic AI they deploy, even when vendors control the underlying technology. Under standard terms, the vendor bears no contractual obligation to prevent a violation. You bear the full enforcement consequence.

Standard technology agreements are silent on this exposure. Standard indemnities — where they exist — cover IP claims and are heavily caveated. The real-world liability from autonomous AI actions manifests as something entirely different: employment discrimination affecting hundreds of candidates simultaneously, financial transaction errors, regulatory communications that misrepresent your position, data incidents affecting thousands of individuals. None of this falls within the indemnity architecture most organizations have contracted for.

The Hard Deadline That Changes Everything: 9 December 2026

Everything described above represents the current liability landscape — the exposure that exists today, under current law, with current contracts. What is about to change that landscape fundamentally is not a regulatory proposal or a judicial trend. It is a legislative deadline written into EU law with the force of an implementation obligation.

The EU Product Liability Directive, which EU member states must implement by 9 December 2026, explicitly includes software and AI systems as "products" subject to strict liability when defective. Under strict liability, proof of fault is not required. A party harmed by a defective AI product may have a direct claim against the manufacturer — regardless of what the technology contract says.

The implications for standard "as is" disclaimers are direct. A clause that disclaims all liability for the accuracy or reliability of AI outputs — the clause that appears in the majority of current AI vendor contracts — faces legal challenge under national strict liability laws that will be in force across all EU member states by this date.

This is not abstract regulatory risk. For any organization deploying AI agents in or connected to EU markets — which, given the extraterritorial nature of the EU AI Act and GDPR, means most significant global enterprises — the legal environment governing their vendor contracts will change on a known date, within a known timeframe. The contracts that organizations leave unchanged between now and that date will be the contracts they are defending against strict liability claims in 2027.

The December 2026 deadline is not a compliance target. It is the point at which the legal infrastructure will actively validate — or demolish — the contract structures organizations have in place today. Waiting for clarity is not a neutral choice. It is a decision to inherit liability on terms written by your vendor.

What Contractual Protection for Agentic AI Actually Requires

The negotiating leverage to build genuine protection into AI vendor contracts exists right now — before regulatory crystallization hardens vendor standard terms, before the first significant agentic liability cases establish precedents that narrow the negotiating space, and before the December 2026 deadline transforms the risk calculus for both sides.

The organizations that are successfully renegotiating agentic AI contracts — and there are a growing number of them, led by in-house teams that have understood the structural nature of this exposure — are pushing for five specific provisions that standard vendor agreements do not include.

• Shared liability caps rather than customer-only exposure. The standard structure caps vendor liability at monthly subscription fees while exposing deployers to unlimited third-party claims. The negotiated alternative establishes mutual caps tied to the actual scope and risk profile of autonomous deployments, not to administrative fee schedules.

• Audit rights over the vendor's compliance controls. The deployer's ability to demonstrate regulatory compliance depends, in part, on the vendor's systems functioning as represented. Without contractual audit rights, the deployer cannot verify this — and cannot satisfy regulators or courts that it exercised adequate oversight.

• Performance SLAs with genuine remedies for systematic failures. Standard agreements provide remedies for system availability failures. They do not provide remedies for autonomous decisions that produce systematically wrong outcomes. The gap between these two framings encompasses most of the real-world liability exposure.

• Joint defense provisions for third-party claims arising from agent actions. When an AI agent's decision harms a third party, both the vendor and deployer may face claims. Without joint defense provisions, the parties approach litigation as adversaries — which means the evidence each produces may actively harm the other's position.

• Mandatory insurance obligations requiring the vendor to maintain coverage for technology failures causing third-party harm. A vendor whose technology causes harm to your customer should be required, by contract, to maintain insurance coverage for that risk. The majority of current agreements contain no such obligation.
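One way to put this list to work is as a simple review checklist. The sketch below is illustrative only: the provision labels and the `missing_provisions` helper are hypothetical names of our own, not terms from any standard or vendor agreement, and deciding whether a contract genuinely contains a provision remains a legal judgment.

```python
# The five provisions discussed above, keyed by short hypothetical labels.
REQUIRED_PROVISIONS = {
    "shared_liability_caps": "Mutual caps tied to the scope and risk of autonomous deployments",
    "audit_rights": "Audit rights over the vendor's compliance controls",
    "performance_slas": "Remedies for systematically wrong autonomous decisions",
    "joint_defense": "Joint defense for third-party claims arising from agent actions",
    "vendor_insurance": "Vendor must insure against technology failures harming third parties",
}


def missing_provisions(contract_review: dict[str, bool]) -> list[str]:
    """List descriptions of the provisions a reviewed contract lacks.

    `contract_review` maps a provision label to True if counsel found it in
    the contract. Labels absent from the review are treated as missing.
    """
    return [
        description
        for label, description in REQUIRED_PROVISIONS.items()
        if not contract_review.get(label, False)
    ]


# Hypothetical review of a standard vendor agreement.
review = {"audit_rights": True, "performance_slas": False}
for gap in missing_provisions(review):
    print("Missing:", gap)
```

Treating unreviewed provisions as missing is a deliberate choice here: silence in a contract is the default the article warns about, so the checklist errs toward flagging it.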

The Insurance Coverage Gap Your Broker Has Not Told You About

Even organizations that have invested in comprehensive insurance programs are discovering that the coverage they believed existed for AI-related risks does not extend to autonomous agent actions in the ways they assumed.

The insurance market is actively developing agentic AI-specific liability products, but they are not yet broadly available — and the products that will emerge are being designed with significant exclusions that reflect industry uncertainty about how courts will allocate AI-related liability. The coverage gap between what organizations believe they have and what they actually have is currently being discovered by in-house legal and risk teams through the most expensive possible mechanism: actual claims.

Three specific coverage questions require urgent clarification with your broker and your current policy language.

• Does your cyber liability policy cover autonomous AI-driven financial transactions? Most cyber policies were designed around data breaches and system intrusions. An autonomous payment authorized by an AI agent and exploited through social engineering sits in an unclear coverage zone, as the insurer's "Was this human-authorized?" question in the opening scenario illustrates.

• Does your employment practices liability policy cover AI-driven hiring decisions? EEOC enforcement is explicit: organizations using AI products for employment decisions remain liable under employment discrimination law even when the algorithm's behavior was entirely vendor-controlled. Standard EPL policies may not have been written with this exposure in mind.

• Does your general liability coverage extend to third-party harms caused by autonomous agent actions? The ICO's January 2026 confirmation that organizations bear full data protection responsibility for agentic AI they deploy — regardless of vendor control of the underlying technology — creates a regulatory liability exposure that general liability policies may address inconsistently.

The commercial insurance market for agentic AI-specific products will mature. The organizations that have properly structured their contracts and governance documentation will qualify for more comprehensive coverage at more favorable premiums when those products arrive. Those operating on unrevised standard contracts may find the new products exclude their primary exposure areas entirely.

The Six-Month Legal Outlook for Deploying Organizations

The legal landscape governing agentic AI liability is not static. Three developments are highly probable within the next six months, and each of them narrows the window for proactive contractual restructuring.

Q3 2026: The First Agentic Liability Test Case

Legal scholars and plaintiff litigation teams are actively examining the argument that AI vendors owe fiduciary duties to customers based on agency relationship theories arising from agentic deployments. The Mobley v. Workday reasoning on delegated responsibility has created the legal foundation. The case that tests it will likely involve an agentic system that caused measurable, attributable harm to a third party — and a vendor contract that was entirely silent on autonomous action liability. When that case is filed, it will recalibrate the negotiating position of every AI vendor in the market overnight.

Q3–Q4 2026: EU Sector-Specific Contract Guidance

EU national competent authorities are generating sector-specific guidance under the AI Act's Article 43 conformity assessment requirements. For organizations deploying agents in employment, financial services, and healthcare, prescriptive guidance on minimum contractual requirements — including mandatory risk allocation provisions — is anticipated before the end of Q3. Getting ahead of this guidance is the strategic opportunity available right now. Those waiting to receive it will find themselves retrofitting contracts under time pressure and regulatory scrutiny.

Q4 2026: Vendor Standard Terms Hardening

As regulatory frameworks crystallize and litigation precedents emerge, AI vendors will harden their standard terms in response. The current negotiating window — where vendors still need customers more than they need standardized contracts that protect only themselves — is closing. The organizations negotiating now are establishing precedents for what fair risk allocation looks like. Those who wait will inherit terms written after vendors have had the benefit of knowing exactly how courts and regulators are treating agentic liability.

The Verdict That Cannot Wait

The liability exposure from agentic AI is not theoretical. It is present. It lives inside the standard terms of vendor contracts signed before autonomous systems existed. It accumulates with every transaction an agent executes, every decision it makes, every communication it sends on your behalf.

The organizations that will define what fair agentic AI risk allocation looks like are not waiting for regulatory clarity. They are negotiating now, while the leverage exists to insist on contract structures that reflect what their agents actually do — rather than structures designed for software that waited for a human to press a button.

You have a window. The question is whether you will use it before it closes — or whether you will discover your exposure through the mechanism that reveals it most expensively.

The contract you signed before your AI agent existed will not protect you from what your AI agent does. The only question is whether you will change it before or after you find out why that matters.

P.S. A staffing firm recently discovered, during a regulatory investigation triggered by a candidate discrimination complaint, that its AI vendor had used screening data to train its model, had processed candidate health information without HIPAA safeguards, and had performed no bias audit. The firm faced regulatory fines, lost a hospital contract worth $3M annually, and spent $380,000 on legal defense and compliance remediation. The vendor's contract had capped its liability at one month of fees.

The firm's contract with the hospital had not contemplated AI-driven decisions at all. Neither contract had been designed for the world they were operating in. Every organization deploying AI agents is one regulatory inquiry away from discovering whether its contracts were designed for that world, or for the one that existed before autonomous systems arrived.