The Regulatory Stack Is Not Five Separate Problems. It Is One Compounding One

EU AI Act. Colorado AI Act. And the single governance architecture that addresses all of them simultaneously, because duplicate compliance infrastructure is the most expensive mistake right now.

TL;DR

The organizations building separate compliance workstreams for each AI regulatory framework are building redundant infrastructure at compounding cost.

The EU AI Act (full enforcement 2 August 2026), Colorado AI Act (effective 30 June 2026), ABA Formal Opinion 512, ISO/IEC 42001, the Caremark board governance standard, and the insurance underwriting requirements all share a common core: documented AI inventory, documented risk assessment, documented human oversight, and documented accountability chains. One unified governance architecture, built once and mapped to each framework's specific language, satisfies all six simultaneously.

This brief maps where the frameworks overlap, names the compliance cost of treating them separately, and delivers the three diagnostic questions that reveal whether your organization is building intelligently or expensively. The full multi-framework compliance mapping, the unified governance build sequence, and the 90-day implementation protocol are behind the paywall.

The Compliance Director's Monday Morning. Six Workstreams. One Organization. Zero Coordination.

A mid-size financial services company. Eighty employees in legal, compliance, and risk. Two AI systems deployed in underwriting and one in customer service. A compliance calendar that, as of Q1 2026, includes the following simultaneous workstreams:

A dedicated EU AI Act high-risk compliance project, staffed with two compliance officers and an external consultant, targeting the 2 August 2026 deadline for Annex III systems. A separate Colorado AI Act implementation project — a different team, different consultant, targeting the 30 June 2026 deadline.

An ABA ethics governance review for the legal department's AI tools, being handled by in-house counsel with no visibility into the other two projects. An insurance coverage audit being conducted by the risk team following the January 2026 policy renewals. A board AI governance initiative following the Brief 5 Caremark analysis, led by the GC. And an exploratory ISO/IEC 42001 certification discussion triggered by a procurement client requirement.

Six workstreams. Six teams. Six consultants. Six deliverables. Not one of them coordinating with the others.

The EU AI Act workstream has mapped the underwriting AI system's risk classification and is preparing technical documentation. The Colorado workstream is conducting an impact assessment on the same AI system. They are not sharing documentation. The ABA review identified a human oversight gap in the legal department's AI tools.

The Caremark initiative is building a board AI governance architecture that requires the same AI inventory both other workstreams need. The insurance audit found an AI exclusion in the E&O policy that the board initiative needs to address. And the ISO 42001 discussion revealed that certification requires governance documentation that all five other workstreams are separately generating.

The total projected compliance spend: $340,000 over six months. The total projected spend if the governance documentation had been built once, as a unified architecture, and mapped to each framework: $95,000. The difference — $245,000 — is the cost of the most common mistake in AI governance today: treating the regulatory stack as five separate problems.
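The arithmetic behind those figures can be checked in a few lines. This is a minimal sketch that uses only the two totals stated above; the variable names are illustrative, and the percentage is derived here rather than stated in the scenario.

```python
# Figures from the scenario above (six-month projected spend, in USD).
siloed_spend = 340_000   # six separate workstreams
unified_spend = 95_000   # one governance build, mapped to each framework

# Cost of duplication, and its share of the siloed budget.
duplication_cost = siloed_spend - unified_spend
duplication_share = duplication_cost / siloed_spend

print(f"Duplication cost: ${duplication_cost:,}")
print(f"Share of siloed spend: {duplication_share:.0%}")
```

On these numbers, roughly 72% of the siloed budget pays for work that a unified build would have produced once.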

Every compliance conversation treats each AI regulation as a separate workstream. The cost of that approach is not only duplicate effort. It is inconsistent documentation, gaps between frameworks that no single workstream closes, and missed opportunities to use a single governance investment to satisfy six compliance obligations simultaneously.

Six Frameworks. One Compliance Obligation. The Architecture They All Require.

The six frameworks below represent the principal AI governance obligations facing organizations with material AI deployment in 2026. Each has its own specific language, its own enforcement mechanism, its own penalty structure, and its own deadline. But beneath the surface differences, each framework is asking the same foundational questions:

Do you know what AI you are running? Have you assessed its risks? Do you have human oversight in place? Can you demonstrate accountability for its outputs?

The organization that can answer those questions with documented, auditable evidence has satisfied the core of every framework on the list — simultaneously, with a single governance investment, rather than six separate ones.

EUROPEAN UNION · REGULATION (EU) 2024/1689

EU Artificial Intelligence Act

Deadline: 2 August 2026 — full enforcement of Annex III high-risk AI provisions. GPAI obligations since August 2025.

Maximum Penalty: Up to €35 million or 7% of global annual turnover for prohibited practices. Up to €15 million or 3% for high-risk non-compliance.

Triggers When: AI system is deployed in Annex III high-risk categories — employment, credit, education, law enforcement, essential services, biometrics — and serves any person in the EU, regardless of where the deploying organization is based.

→ AI system risk classification and documentation (Annex III categorization)

→ Quality management system with documented AI governance procedures

→ Technical documentation for each high-risk AI system including intended purpose, architecture, training data documentation, and risk assessment

→ Conformity assessment process — internal for most Annex III systems, notified body assessment for some

→ EU database registration for high-risk AI systems before deployment

→ Human oversight measures — specific requirements for deployers to implement and monitor

→ Post-market monitoring system with incident reporting obligations

→ Transparency obligations under Article 50 — disclosure when interacting with AI systems

UNITED STATES · COLORADO SB 24-205

Colorado Artificial Intelligence Act

Deadline: 30 June 2026 — effective date for all developer and deployer obligations after delay from original 1 February 2026.

Maximum Penalty: Violations constitute unfair trade practices under Colorado Consumer Protection Act — AG enforcement with significant financial and reputational exposure. Up to $20,000 per affected consumer in some scenarios.

Triggers When: AI system makes or is a substantial factor in making a consequential decision affecting Colorado residents in employment, housing, credit, healthcare, education, insurance, government services, or legal services.

→ Risk management policy and program documenting principles, processes, and personnel for identifying algorithmic discrimination risks

→ Annual impact assessments evaluating whether the high-risk AI system has caused or is likely to cause algorithmic discrimination

→ Consumer notification before consequential AI-assisted decisions are made

→ Consumer disclosure of adverse AI-assisted decisions with plain-language explanation

→ Consumer appeal mechanism with human review where technically feasible

→ 90-day notification to Colorado AG and known deployers if algorithmic discrimination discovered or credibly reported

→ Public statement on website or use case inventory describing high-risk systems deployed and discrimination risk management approach

→ Three-year record retention for all impact assessments and compliance records

UNITED STATES · ABA FORMAL OPINION 512 (JULY 2024)

ABA Rules of Professional Conduct — AI Governance

Deadline: Immediately applicable to all lawyers using generative AI in legal practice. No grace period — obligations engage at the moment of AI tool use.

Maximum Penalty: Professional discipline ranging from reprimand to disbarment. Malpractice liability. Secondary liability for supervising attorneys under Rules 5.1 and 5.3.

Triggers When: Any lawyer uses generative AI tools in connection with client representation — for research, drafting, analysis, communication, or any other legal task.

→ Technological competence: reasonable understanding of AI tool capabilities and limitations including hallucination risk (Rule 1.1)

→ Confidentiality protection: ensuring client data is not shared with AI platforms whose terms permit training on user inputs (Rule 1.6)

→ Informed client communication: disclosure when AI is used materially in representation (Rule 1.4)

→ Output verification: independent review of all AI-generated content before submission or delivery to clients (Rules 1.1, 3.3)

→ Candor to tribunal: disclosure of AI use per applicable court rules; verification that all AI-generated citations exist and stand for cited propositions (Rule 3.3)

→ Supervision policy: firm-level policies governing AI use across all lawyers and non-lawyer staff (Rules 5.1, 5.3)

→ Fee transparency: no charging full hourly rates for tasks AI completed in a fraction of standard time (Rule 1.5)

INTERNATIONAL · ISO/IEC 42001:2023

AI Management System Standard — Certification

Deadline: No mandatory deadline — but increasingly required by procurement contracts, insurance underwriters, and financial institution counterparties as a condition of engagement.

Maximum Penalty: No direct regulatory penalty — but failure to achieve ISO 42001 certification is becoming a commercial disqualifier in regulated procurement contexts and an underwriting criterion for affirmative AI insurance coverage.

Triggers When: Organization deploys AI in any context and seeks to demonstrate governance maturity to clients, counterparties, insurers, or regulators through independent certification.

→ Documented AI management system scope and policy statement

→ AI risk assessment framework aligned with ISO 42001 Annex A controls

→ AI impact assessment methodology covering ethical, legal, and operational risks

→ Defined human oversight mechanisms for all AI systems in scope

→ AI system inventory with risk classification for each system

→ Supplier and third-party AI governance requirements

→ Internal audit program for the AI management system

→ Management review process and continuous improvement documentation

→ Competence and awareness training records for AI governance personnel

Why Organizations Treating Each Framework Separately Are Building the Most Expensive Compliance Architecture in Their History

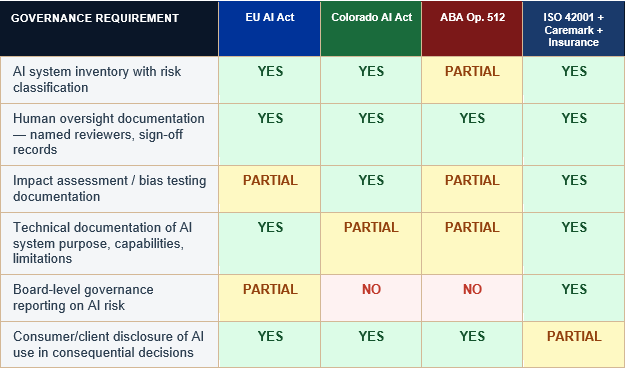

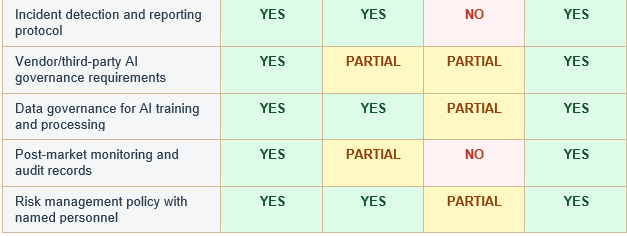

The financial case for unified governance architecture is not subtle. The table below maps the documentation that each of the six frameworks requires against a unified governance build — and shows where a single governance investment satisfies multiple simultaneous requirements.

The cost duplication occurs across three dimensions.

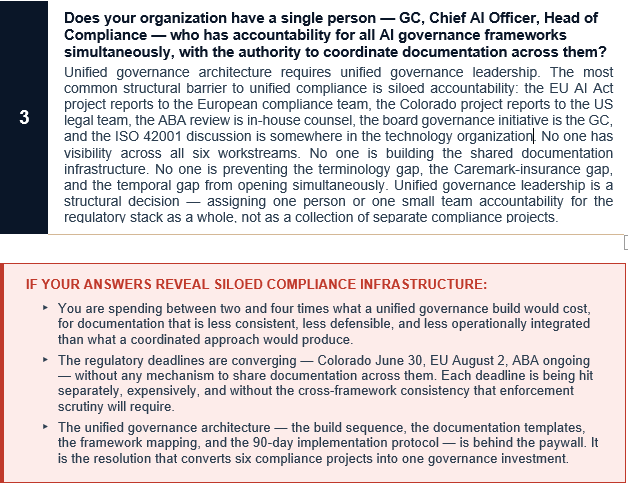

First, there is the direct financial duplication: six separate projects, six separate consultants, six separate documentation sets, with each team unaware that the others are producing overlapping deliverables.

Second, there is the inconsistency risk: documentation produced separately for different frameworks uses different language, different risk assessments, and different governance terminology. When a regulator or litigant puts the documents together — as they will in any serious enforcement action — the inconsistencies become evidence of governance that was performative rather than structural.

Third, there is the gap risk: organizations building separate compliance frameworks for each regulation are almost inevitably producing documentation that satisfies each framework's specific language while leaving gaps in the underlying governance architecture that no single framework closes. The EU AI Act may be satisfied without the board reporting that the Caremark governance standard requires. The ABA compliance may be complete without the insurance documentation the underwriter requires. The gaps between frameworks are where the real exposure lives.

Organizations trying to address each framework separately are building redundant compliance infrastructure at compounding cost. The intelligence insight: EU AI Act, Colorado AI Act, ABA Opinion 512, ISO 42001, Caremark, and insurance underwriting all share a common foundational core. One unified architecture, built to that core, satisfies all six simultaneously.

Where One Governance Investment Satisfies Multiple Framework Requirements

The matrix below maps six core governance documentation requirements against the six principal AI frameworks. Where a requirement satisfies a framework, it is marked YES. Where the framework has relevant but distinct requirements, it is marked PARTIAL. Where no overlap exists, it is marked NO. A blank indicates the framework does not specifically address the requirement.

The matrix makes the unified governance case visually explicit. AI inventory, human oversight documentation, risk management policy, and data governance each appear across four or more of the six framework columns. An organization that produces these four core deliverables as one unified governance document, rather than as six separate framework-specific versions, satisfies most of each framework's foundational requirements with a single investment.

The differences between frameworks are real and require framework-specific attention. The EU AI Act's technical documentation requirements, the Colorado Act's consumer notification and 90-day AG disclosure obligation, the ABA's fee transparency standard, and the board reporting format for Caremark all require specific language and specific format.

But the underlying governance reality they describe — the AI inventory, the risk assessment, the oversight mechanism, the accountability chain — is the same governance reality. Build it once. Map it to each framework's language. Produce six compliant documents from one governance architecture rather than six separate governance architectures each producing one.
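The "build once, map to each framework" idea can be sketched as a small data structure. What follows is a hedged illustration, not the brief's own matrix or build sequence: the labels are copied from the requirement lists earlier in this brief, the mapping is deliberately incomplete, and names such as `GovernanceRecord`, `map_to_framework`, and the `GOV-00x` document references are hypothetical.

```python
from dataclasses import dataclass

# Framework-specific labels for three core artifacts, copied from the
# requirement lists earlier in this brief. Illustrative and incomplete:
# this is NOT the author's overlap matrix.
FRAMEWORK_LABELS = {
    "ai_inventory": {
        "EU AI Act": "AI system risk classification and documentation",
        "Colorado AI Act": "Public statement or use case inventory",
        "ISO/IEC 42001": "AI system inventory with risk classification",
    },
    "risk_assessment": {
        "EU AI Act": "Technical documentation including risk assessment",
        "Colorado AI Act": "Annual impact assessment",
        "ISO/IEC 42001": "AI risk assessment framework",
    },
    "human_oversight": {
        "EU AI Act": "Human oversight measures",
        "Colorado AI Act": "Consumer appeal mechanism with human review",
        "ABA Formal Opinion 512": "Output verification",
        "ISO/IEC 42001": "Defined human oversight mechanisms",
    },
}


@dataclass
class GovernanceRecord:
    """One AI system's core documentation, built once (hypothetical shape)."""
    system_name: str
    documents: dict[str, str]  # core artifact -> internal document reference


def map_to_framework(record: GovernanceRecord, framework: str) -> dict[str, str]:
    """Relabel the shared documents in one framework's own language."""
    view = {}
    for artifact, labels in FRAMEWORK_LABELS.items():
        if framework in labels and artifact in record.documents:
            view[labels[framework]] = record.documents[artifact]
    return view


def missing_artifacts(record: GovernanceRecord, framework: str) -> list[str]:
    """Core artifacts the framework names that the record does not yet hold."""
    return [
        artifact
        for artifact, labels in FRAMEWORK_LABELS.items()
        if framework in labels and artifact not in record.documents
    ]


# Hypothetical record for the underwriting system in the scenario above.
underwriting = GovernanceRecord(
    system_name="underwriting-model",
    documents={"ai_inventory": "GOV-001", "risk_assessment": "GOV-002"},
)

print(map_to_framework(underwriting, "Colorado AI Act"))
print(missing_artifacts(underwriting, "EU AI Act"))
```

The design choice worth noting is that each document exists exactly once in the record; the framework views are derived labels over the same references, so a correction to the underlying document reaches every framework's output at the same time.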

THE THREE GAPS THAT SILO COMPLIANCE ALWAYS LEAVES OPEN

▸ Gap 1: The Terminology Gap. Documentation produced for the EU AI Act uses the EU's defined terms: 'deployer,' 'high-risk system,' 'conformity assessment.' Documentation produced for Colorado uses Colorado's terms: 'consequential decision,' 'algorithmic discrimination,' 'impact assessment.' Documentation produced for ABA compliance uses professional responsibility language. A regulator reviewing all three finds three different descriptions of the same governance reality. The inconsistency is not a compliance gap; it is evidence of governance designed for regulators rather than for operational integrity.

▸ Gap 2: The Caremark-Insurance Gap. Most compliance programs produce EU AI Act and Colorado AI Act documentation without connecting it to the Caremark board reporting standard or the insurance underwriting requirements. The board is not receiving AI risk reports in the format Brief 5 describes. The insurance documentation does not reflect the governance controls the insurer requires. Two compliance workstreams that satisfy their target regulations leave the board exposed and the coverage gap open.

▸ Gap 3: The Temporal Gap. EU AI Act compliance documentation is built for August 2026. Colorado documentation is built for June 2026. ABA compliance is built for now. ISO 42001 certification is built over twelve to eighteen months. Each workstream has a different timeline and a different definition of 'done.' The organization that reaches August 2026 with EU AI Act compliance complete but ABA ethics governance still outstanding has not built governance; it has sequenced a regulatory checkbox exercise.

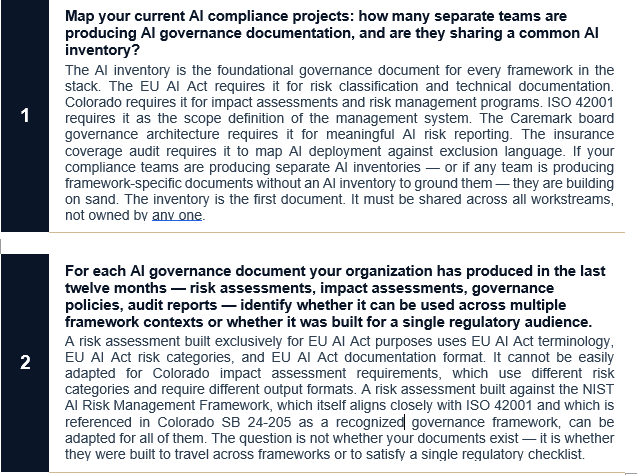

3 Questions That Reveal Whether You Are Building Intelligently or Expensively

The diagnostic for unified governance is not a regulatory audit. It is a coordination audit — revealing whether the compliance investments already being made are producing shared governance infrastructure or siloed regulatory outputs.

The Unified Governance Architecture: Building Once for All Six Frameworks

The three diagnostic questions locate the siloed compliance problem. What follows is the complete resolution — the build sequence that produces unified governance documentation satisfying all six frameworks, the framework-specific mapping that adapts the core architecture to each regulatory language, and the 90-day implementation protocol that converts the insight into execution.

This is not an aspirational governance framework. It is an operational build plan, sequenced by priority and deadline, designed to be executed by an organization that has identified the silo problem and is ready to address it structurally rather than incrementally.

What follows is the complete multi-framework compliance mapping methodology, the overlap matrix that reveals where one governance investment satisfies multiple regulatory obligations simultaneously, and the unified governance architecture that is defensible across EU AI Act, Colorado AI Act, ABA Opinion 512, ISO 42001, Caremark, and insurance frameworks — through a single coordinated build.

Subscribe to Law + Koffee to keep reading this post and get 7 days of free access to the full post archives.